Intel and SambaNova team up on heterogeneous AI inference platform: different hardware performs different workloads

⚡ Quick Hits

- Intel and SambaNova are collaborating to create a hybrid, highly efficient AI inference solution.

- The system uses heterogeneous computing to delegate different AI tasks to specialized hardware components.

- The architecture is heavily optimized for running major large language models, including those from Meta.

Greetings, tech enthusiasts. The Tech Monk here to bring you the latest evolution in AI infrastructure.

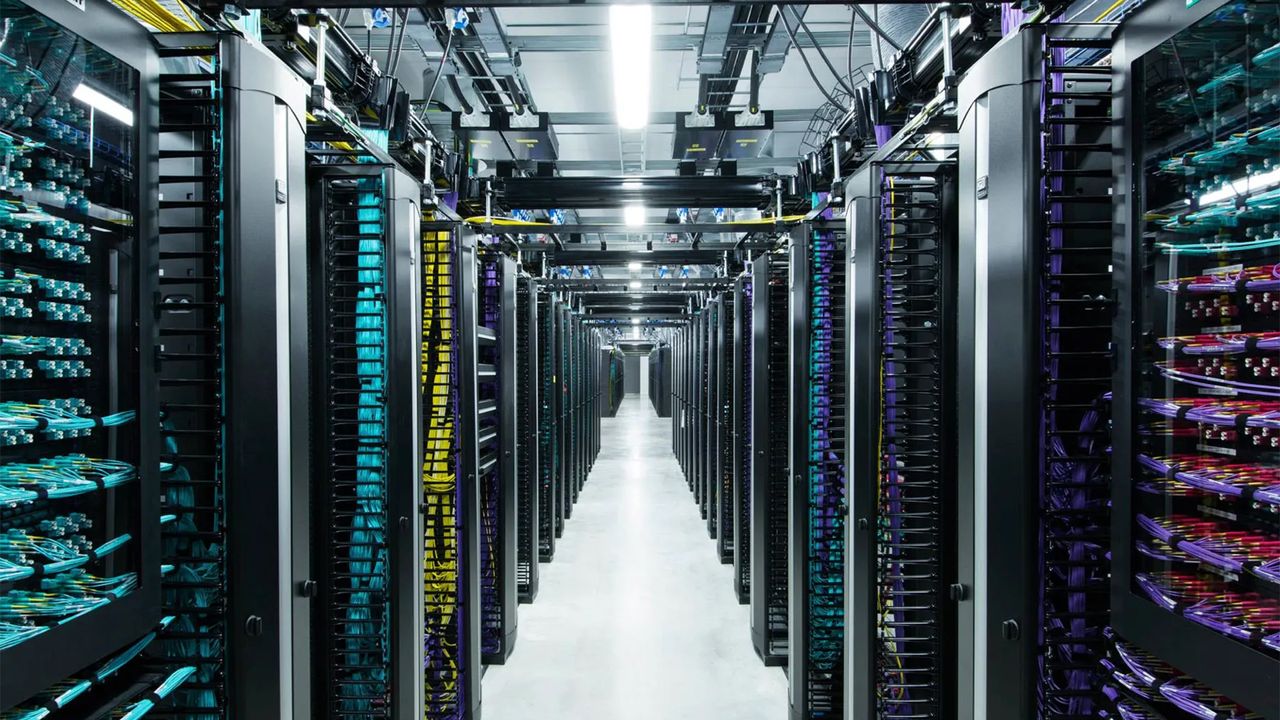

In the rapidly expanding world of artificial intelligence, a one-size-fits-all hardware approach is no longer cutting it. Recognizing this, Intel and SambaNova have officially teamed up to develop a heterogeneous AI inference platform. Instead of relying on a single type of processor to handle every computation, this collaborative system plays to the strengths of different silicon architectures, routing specific AI workloads to the hardware best equipped to process them.
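To make the routing idea concrete, here is a minimal Python sketch of a dispatcher that sends each workload to a device class. Everything in it is an assumption for illustration: the `Device` classes, the `Router` and `plan` helpers, the workload labels (`tokenize`, `prefill`, `decode`), and the mapping between them are hypothetical. The announcement does not spell out which tasks land on which hardware, so treat this as a picture of the general pattern, not as Intel's or SambaNova's actual design.

```python
from dataclasses import dataclass
from enum import Enum


class Device(Enum):
    # Hypothetical device classes, not product names from the announcement.
    CPU = "cpu"
    ACCELERATOR = "accelerator"


@dataclass
class Router:
    # Maps a workload label to the device class assumed to suit it best.
    table: dict[str, Device]
    # Anything not listed in the table falls back to this device.
    default: Device = Device.CPU

    def route(self, workload: str) -> Device:
        return self.table.get(workload, self.default)


# Illustrative mapping only: these labels and assignments are assumptions.
ROUTES = {
    "tokenize": Device.CPU,
    "prefill": Device.ACCELERATOR,
    "decode": Device.ACCELERATOR,
}


def plan(workloads: list[str], router: Router) -> dict[Device, list[str]]:
    """Group workloads by the device the router picks, preserving order."""
    groups: dict[Device, list[str]] = {device: [] for device in Device}
    for workload in workloads:
        groups[router.route(workload)].append(workload)
    return groups


if __name__ == "__main__":
    router = Router(table=ROUTES)
    for device, items in plan(["tokenize", "prefill", "decode", "log"], router).items():
        print(device.value, items)
```

The design choice worth noticing is the fallback: any workload the table does not recognize goes to the general-purpose device, so the specialized hardware only sees the work it was explicitly assigned.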

The details shared so far center on pairing Intel's enterprise ecosystem with SambaNova's specialized AI accelerators, and the overarching goal is efficiency and speed. By dividing and conquering the compute pipeline, the architecture aims to reduce bottlenecks when running massive, compute-heavy open-source models, most notably those developed by Meta.

For enterprise users and developers looking to scale their AI operations, this partnership signals a major step forward. As AI models become more complex, heterogeneous platforms like this one look set to become the gold standard for achieving high-throughput, low-latency inference without breaking the bank on power consumption. Stay tuned, because the hardware wars are getting incredibly smart.