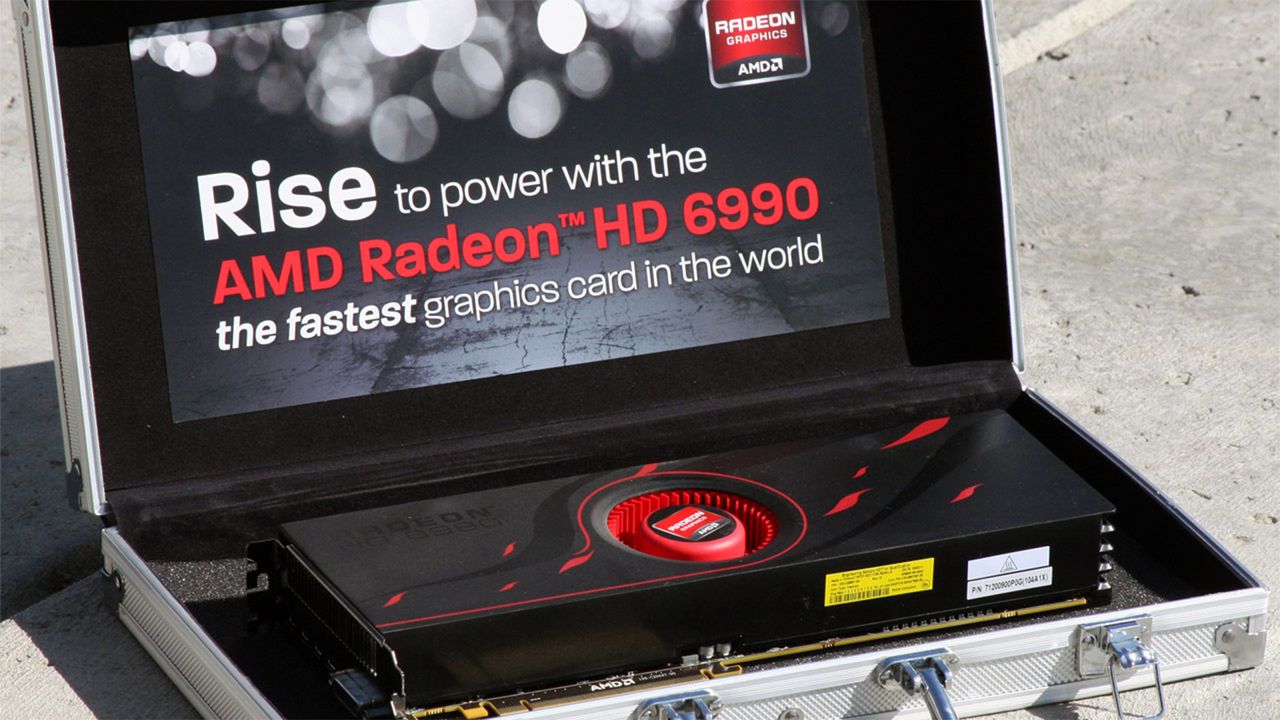

AMD’s dual-GPU Radeon HD 6990 launched 15 years ago: the power, heat, and noise monster was crowned the fastest graphics card in the world

⚡ Quick Hits

- Launched 15 years ago and crowned the fastest graphics card in the world at the time.

- Featured a highly demanding dual-GPU design, with two GPUs on a single PCB.

- Remembered historically as a "monster" for its massive power draw, extreme heat output, and jet-engine acoustics.

Welcome back, tech enthusiasts. The Tech Monk here, bringing you a blast from the past that still echoes in the halls of hardware history. Today, we're taking a nostalgic dive into a true titan of the PC building world: the AMD Radeon HD 6990.

Fifteen years ago, AMD decided to push the boundaries of extreme performance. Instead of just tweaking a few clock speeds, they went all out and strapped two massive GPUs onto a single PCB. The result was a glorious, uncompromising monster of a card that instantly claimed the crown as the fastest graphics card on the planet.

But holding that crown came at a steep cost. The Radeon HD 6990 is remembered today as much for its power draw, heat, and noise as for its frame rates. It was a notoriously power-hungry beast that generated immense heat, and its cooler sounded more like a jet engine taking off than a standard PC component.

Despite the noise and thermal challenges, the HD 6990 stands as a testament to an era of raw, unbridled hardware ambition, a time when pure, brute-force performance mattered far more than power efficiency. It remains a legendary piece of tech history for those who lived through the golden age of dual-GPU behemoths.